Blogs

Because sharing is caring

Knowledge Graphs with Juan Sequeda

Dive deep into the world of knowledge graphs with Juan Sequeda on the Agile Data Podcast, hosted by Shane Gibson. Explore key insights from Juan’s journey in computer science, his pivotal role in semantic web development, and the transformative power of knowledge graphs in data integration. Discover how these technologies are reshaping the landscape of data management and the exciting future prospects with the advent of Large Language Models (LLMs). Tune in to understand the practical applications, challenges, and the future of knowledge graphs in enterprise data strategy.

Data Storytelling with Kat Greenbrook

Explore the Art of Data Storytelling with Kat Greenbrook on the Agile Data Podcast. Dive into Kat’s transformative journey from aspiring vet to a data storytelling expert, and discover the power of the ABT (And, But, Therefore) narrative framework in conveying compelling data insights. Uncover common pitfalls and learn crucial differences between data visualization and storytelling. Enhance your business communication skills with practical tips and insights from ‘The Data Storyteller’s Handbook.’ Perfect for professionals in data analytics, business intelligence, and anyone keen to master the art of turning data into impactful stories.

Demystifying CDP’s vs. Data Warehouse’s

In this article we describe the concepts of Customer Data Platforms (CDP) versus Data Warehouses.

The magic of DocOps

TL;DR Patterns like DocOps provide massive value by increasing collaboration across team members and automating manual tasks. But working in a DocOps way still requires a high level of technical skill. For the AgileData App and Platform, we want to deliver those...

Iterations create milestone dates, milestone dates force trade off decisions to be made

Data teams struggle to not “boil the ocean” when doing data work.

Use milestones as a pattern to help the data team to focus on what really needs to be built and manage the trade-off decisions for what doesn’t.

Your data team are mercenaries, define your ways of working based on this

Modern data team members are transient, often staying less than 5 years, unlike the long-term loyalty of past decades.

Companies should adapt by defining robust Ways of Working (WoW) that endure beyond individual tenures.

Balancing in-house teams with reliable data vendors for continuity and efficiency may also be a useful pattern as part of your WoW.

I’m getting pedantic about semantics

TL;DR Having a shared language is important to help a data team create their shared ways of working. When we talk about self-service, we should always highlight which self-service pattern we are talking about. I'm getting pedantic about semantics. In the Data Domain we...

The Art of Data: Visualisation vs Storytelling

Data visualization is like painting with data, using charts and graphs to make trends and patterns easy to understand. It’s great for presenting data objectively.

Data storytelling weaves a narrative around data, adding context, engaging emotions, and inspiring action. It’s perfect for persuading stakeholders.

Ways of Working with Scott Ambler

Join Shane Gibson on the Agile Data Podcast for an enlightening conversation with Scott Ambler, an IT and Agile expert. Delve into Scott’s journey from pioneering programmer to data architecture and Agile methodologies. Discover the evolution of Agile data, the importance of adapting ways of working, and the pitfalls of best practices. Learn valuable insights into continuous improvement, team dynamics, and the complexities of data quality in today’s fast-paced IT landscape. Don’t miss this episode for an in-depth exploration of Agile data and its impact on IT projects and processes.

AgileData App

Explore AgileData features, updates, and tips

Network

Learn about consulting practices and good patterns for data-focused consultancies

DataOps

Learn from our DataOps expertise, covering essential concepts, patterns, and tools

Data and Analytics

Unlock the power of data and analytics with expert guidance

Google Cloud

Imparting knowledge on Google Cloud's capabilities and its role in data-driven workflows

Journey

Explore real-life stories of our challenges and lessons learned

Product Management

Enrich your product management skills with practical patterns

What Is

Describing data and analytics concepts, terms, and technologies to enable better understanding

Resources

Valuable resources to support your growth in the agile, and data and analytics domains

AgileData Podcast

Discussing combining agile, product and data patterns.

No Nonsense Agile Podcast

Discussing agile and product ways of working.

App Videos

Explore videos to better understand the AgileData App's features and capabilities.

The challenge of parsing files from the wild

In this instalment of the AgileData DataOps series, we’re exploring how we handle the challenges of parsing files from the wild. To ensure clean and well-structured data, each file goes through several checks and processes, similar to a water treatment plant. These steps include checking for previously seen files, looking for matching schema files, queuing the file, and parsing it. If a file fails to load, we have procedures in place to retry loading or notify errors for later resolution. This rigorous data processing ensures smooth and efficient data flow.
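The checks described above can be sketched in a few lines of Python. This is a minimal illustration, not AgileData's implementation: the function names, the content-hash check for previously seen files, the schema lookup by base file name, and the retry count are all assumptions made for the example.

```python
import hashlib
from collections import deque


def file_fingerprint(content: bytes) -> str:
    """Hash the file bytes so a re-dropped file can be recognised."""
    return hashlib.sha256(content).hexdigest()


def parse_csv(content: bytes, schema: list) -> list:
    """Hypothetical parser: reject files whose header differs from the schema."""
    lines = content.decode("utf-8").splitlines()
    if not lines or lines[0].split(",") != schema:
        raise ValueError("header does not match schema")
    return [dict(zip(schema, line.split(","))) for line in lines[1:]]


def process_file(name, content, seen, schemas, parse, max_attempts=2):
    """Run one file through the checks and return a status string."""
    digest = file_fingerprint(content)
    if digest in seen:
        return "skipped: previously seen"
    # Assumption: schemas are keyed by the file's base name.
    schema = schemas.get(name.rsplit(".", 1)[0])
    if schema is None:
        return "error: no matching schema"
    for attempt in range(1, max_attempts + 1):
        try:
            parse(content, schema)
        except ValueError:
            continue  # retry the load
        seen.add(digest)
        return f"loaded on attempt {attempt}"
    return "error: notify for later resolution"


def run_queue(files, schemas, parse=parse_csv):
    """Queue the dropped files and process them in arrival order."""
    queue = deque(files)
    seen = set()
    results = []
    while queue:
        name, content = queue.popleft()
        results.append((name, process_file(name, content, seen, schemas, parse)))
    return results
```

Calling `run_queue` with a list of `(name, bytes)` pairs and a dictionary of schemas returns one status per file, so failed loads can be collected and resolved later.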

The Magic of Customer Segmentation: Unlocking Personalised Experiences for Customers

Customer segmentation is the magical process of dividing your customers into distinct groups based on their characteristics, preferences, and needs. By understanding these segments, you can tailor your marketing strategies, optimize resource allocation, and maximize customer lifetime value. To unleash your customer segmentation magic, define your objectives, gather and analyze relevant data, identify key criteria, create distinct segments, profile each segment, tailor your strategies, and continuously evaluate and refine. Embrace the power of customer segmentation and create personalised experiences that enchant your customers and drive business success.

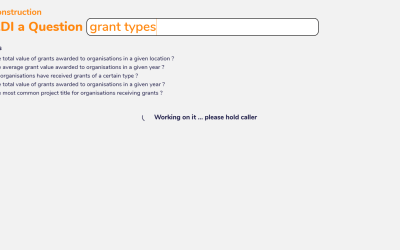

Fast Answers at Your Fingertips: Unveiling AgileData’s ‘Ask a Quick Question’ Feature

Immerse yourself in the magical world of data with AgileData’s ‘Ask a Quick Question’ capability. Perfectly designed for data analysts and business analysts who need to swiftly extract insights from data, this capability facilitates quick data queries and rapid exploratory data analysis.

The Hitchhikers guide to the Information Product Canvas

TL;DR In mid 2023 I was lucky enough to present at The Knowledge Gap on the Information Product Canvas. The Information Product Canvas is an innovative pattern designed to capture data requirements visually and...

Magical plumbing for effective change dates

We discuss how to handle change data in a hands-off filedrop process. We use the ingestion timestamp as a simple proxy for the effective date of each record, allowing us to version each day’s data. For files with multiple change records, we scan all columns to identify and rank potential effective date columns. We then pass this information to an automated rule, ensuring it gets applied as we load the data. This process enables us to efficiently handle change data, track data flow, and manage multiple changes in an automated way.
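As a rough sketch of the column scan described above, the Python below ranks candidate effective date columns and falls back to the ingestion timestamp when none applies. The scoring rule (share of values that parse as dates, plus a bonus for suggestive column names), the date formats, and all function names are assumptions for illustration; the actual ranking logic is not described in this summary.

```python
from datetime import datetime

# Assumed formats and name hints, chosen only for this example.
DATE_FORMATS = ("%Y-%m-%d", "%Y-%m-%d %H:%M:%S", "%d/%m/%Y")
NAME_HINTS = ("effective", "updated", "modified", "changed")


def parses_as_date(value: str) -> bool:
    """Return True if the value matches one of the assumed date formats."""
    for fmt in DATE_FORMATS:
        try:
            datetime.strptime(value, fmt)
            return True
        except ValueError:
            continue
    return False


def rank_effective_date_columns(rows):
    """Score every column and return (column, score) pairs, best first."""
    if not rows:
        return []
    scores = {}
    for column in rows[0]:
        values = [row.get(column, "") for row in rows]
        parse_rate = sum(parses_as_date(v) for v in values) / len(values)
        if parse_rate == 0:
            continue  # not a date column at all
        name_bonus = 0.5 if any(h in column.lower() for h in NAME_HINTS) else 0.0
        scores[column] = parse_rate + name_bonus
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def pick_effective_date(row, ranked, ingested_at):
    """Use the top-ranked column when it holds a date, else the ingestion timestamp."""
    if ranked:
        best_column = ranked[0][0]
        if parses_as_date(row.get(best_column, "")):
            return row[best_column]
    return ingested_at
```

The ranked list is the kind of information that could then be handed to an automated load rule, with the ingestion timestamp remaining the default proxy.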

The patterns of Activity Schema with Ahmed Elsamadisi

In an insightful episode of the AgileData Podcast, Shane Gibson hosts Ahmed Elsamadisi to delve into the evolving world of data modeling, focusing on the innovative concept of the Activity Schema. Elsamadisi, with a rich background in AI and data science, shares his journey from working on self-driving cars to spearheading data initiatives at WeWork. The discussion centers on the pivotal role of data modeling in enhancing scalability and efficiency in data systems, with Elsamadisi highlighting the limitations of traditional models like star schema and data vault in addressing complex, modern data queries.

Unveiling the Secrets of Data Quality Metrics for Data Magicians: Ensuring Data Warehouse Excellence

Data quality metrics are crucial indicators in a data warehouse that measure the accuracy, completeness, consistency, timeliness, and uniqueness of data. These metrics help organisations ensure their data is reliable and fit for use, thus driving effective decision-making and analytics.
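Two of these metrics are simple enough to show in code. The sketch below computes completeness and uniqueness for one column of a small in-memory table; the function names and the exact definitions (for example, treating empty strings as missing) are assumptions for illustration, and real warehouses would usually compute these in SQL.

```python
def completeness(rows, column):
    """Share of rows where the column is present and not empty."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if row.get(column) not in (None, ""))
    return filled / len(rows)


def uniqueness(rows, column):
    """Share of non-empty values in the column that are distinct."""
    values = [row[column] for row in rows if row.get(column) not in (None, "")]
    if not values:
        return 0.0
    return len(set(values)) / len(values)
```

A score of 1.0 means every row is filled in (completeness) or every value is distinct (uniqueness); lower scores point at rows worth investigating.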

Amplifying Your Data’s Value with Business Context

The AgileData Context feature enhances data understanding, facilitates effective decision-making, and preserves corporate knowledge by adding essential business context to data. This feature streamlines communication, improves data governance, and ultimately, maximises the value of your data, making it a powerful asset for your business.

New Google Cloud feature to Optimise BigQuery Costs

This blog explores AgileData’s use of Google Cloud, specifically its BigQuery service, for cost-effective data handling. As a bootstrapped startup, AgileData incorporates data storage and compute costs into its SaaS subscription, protecting customers from unexpected bills. We constantly seek ways to minimise costs, utilising new Google tools for cost-saving recommendations. We argue that the efficiency and value of Google Cloud make it a preferable choice over other cloud analytic database options.

Data as a First-Class Citizen: Empowering Data Magicians

Data as a first-class citizen recognizes the value and importance of data in decision-making. It empowers data magicians by integrating data into the decision-making process, ensuring accessibility and availability, prioritising data quality and governance, and fostering a data-centric mindset.

The patterns of Data Vault with Hans Hultgren

In a compelling episode of the Agile Data Podcast, Shane Gibson invites Hans Hultgren to explore the intricacies of Data Vault modeling. Hans, with a substantial background in IT and data warehousing, brings his expertise to the table, discussing the evolution and benefits of Data Vault modeling in today’s complex data landscapes. Shane and Hans navigate through the foundations of Data Vault, including its core components like hubs, satellites, and links, and delve into more advanced concepts like Same-As Links (SALs) and Hierarchical Links (HALs). They highlight how Data Vault enables flexibility, agility, and incremental development in data modeling, ensuring scalability and adaptability to change.

To whitelabel or not to whitelabel

Are you wrestling with the concept of whitelabelling your product? We at AgileData have been there. We discuss our journey through the decision-making process, where we grappled with the thought of our painstakingly crafted product being rebranded by another company.

Metadata-Driven Data Pipelines: The Secret Behind Data Magicians’ Greatest Tricks

Metadata-driven data pipelines are the secret behind seamless data flows, empowering data magicians to create adaptable, scalable, and evolving data management systems. Leveraging metadata, these pipelines are dynamic, flexible, and automated, allowing for easy handling of changing data sources, formats, and requirements without manual intervention.
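To make the idea concrete, here is a minimal sketch of a metadata-driven loader in Python. The metadata layout (`delimiter`, `rename`, `types`) and the source names are hypothetical; the point is only that one generic function handles every source, and a new source or format change means editing metadata rather than code.

```python
import csv
import io

# Hypothetical metadata: one entry per source describes how to read it.
PIPELINE_METADATA = {
    "customers": {
        "delimiter": ",",
        "rename": {"cust_id": "customer_id"},
        "types": {"customer_id": int},
    },
    "orders": {
        "delimiter": "|",
        "rename": {},
        "types": {"amount": float},
    },
}


def load(source, text, metadata=PIPELINE_METADATA):
    """Read delimited text for a source using only what the metadata says."""
    spec = metadata[source]
    reader = csv.DictReader(io.StringIO(text), delimiter=spec["delimiter"])
    rows = []
    for raw in reader:
        # Apply column renames, leaving unlisted columns unchanged.
        row = {spec["rename"].get(key, key): value for key, value in raw.items()}
        # Cast the columns that have a declared type.
        for column, cast in spec["types"].items():
            row[column] = cast(row[column])
        rows.append(row)
    return rows
```

For example, `load("orders", "id|amount\n1|9.5\n")` reads a pipe-delimited file and casts `amount` to a float without any source-specific code.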

Data Consulting Patterns with Joe Reis

Dive into the world of data consulting with Shane Gibson and Joe Reis on the Agile Data Podcast. Explore their journey from traditional employment to successful data consulting, covering client acquisition, business models, financial management, reputation, sales strategies, employee management, and work-life balance.

The Enchanting World of Data Modeling: Conceptual, Logical, and Physical Spells Unraveled

Data modeling is a crucial process that involves creating a shared understanding of data and its relationships. The three primary data model patterns are conceptual, logical, and physical. The conceptual data model provides a high-level overview of the data landscape, the logical data model delves deeper into data structures and relationships, and the physical data model translates the logical model into a database-specific schema. Understanding and effectively using these data models is essential for business analysts and data analysts to create efficient, well-organised data ecosystems.

Shane Gibson – Making Data Modeling Accessible

TL;DR Early in 2023 I was lucky enough to talk to Joe Reis on the Joe Reis Show to discuss how to make data modeling more accessible, why the world's moved past traditional data modeling and more. Listen to the episode...

AgileData Cost Comparison

AgileData reduces the cost of your data team and your data platform.

In this article we provide examples of those cost savings.

Cloud Analytics Databases: The Magical Realm for Data

Cloud Analytics Databases provide a flexible, high-performance, cost-effective, and secure solution for storing and analysing large amounts of data. These databases promote collaboration and offer various choices, such as Snowflake, Google BigQuery, Amazon Redshift, and Azure Synapse Analytics, each with its unique features and ecosystem integrations.

Data Warehouse Technology Essentials: The Magical Components Every Data Magician Needs

The key components of a successful data warehouse technology capability include data sources, data integration, data storage, metadata, data marts, data query and reporting tools, data warehouse management, and data security.

Unveiling the Definition of Data Warehouses: Looking into Bill Inmon’s Magicians Top Hat

In a nutshell, a data warehouse, as defined by Bill Inmon, is a subject-oriented, integrated, time-variant, and non-volatile collection of data that supports decision-making processes. It helps data magicians, like business and data analysts, make better-informed decisions, save time, enhance collaboration, and improve business intelligence. To choose the right data warehouse technology, consider your data needs, budget, compatibility with existing tools, scalability, and real-world user experiences.

Martech – The Technologies Behind the Marketing Analytics Stack: A Guide for Data Magicians

Explore the MarTech stack based on two different patterns: marketing application and data platform. The marketing application pattern focuses on tools for content management, email marketing, CRM, social media, and more, while the data platform pattern emphasises data collection, integration, storage, analytics, and advanced technologies. By understanding both perspectives, you can build a comprehensive martech stack that efficiently integrates marketing efforts and harnesses the power of data to drive better results.