Blogs

Because sharing is caring

Unveiling the Magic of Change Data Collection Patterns: Exploring Full Snapshot, Delta, CDC, and Event-Based Approaches

Change data collection patterns are like magical lenses that allow you to track data changes. The full snapshot pattern captures complete data at specific intervals for historical analysis. The delta pattern records only changes between snapshots to save storage. CDC captures real-time changes for data integration and synchronization. The event-based pattern tracks data changes triggered by specific events. Each pattern has unique benefits and use cases. Choose the right approach based on your data needs and become a data magician who stays up-to-date with real-time data insights!

Layered Data Architectures with Veronika Durgin

Dive into the Agile Data Podcast with Shane Gibson and Veronika Durgin as they explore the intricacies of layered data architecture, data vault modeling, and the evolution of data management. Discover key insights on balancing data democratisation with governance, the role of ETL processes, and the impact of organisational structure on data strategy.

How can data teams use Generative AI with Shaun McGirr

Discover the transformative impact of generative AI and large language models (LMS) in the world of data and analytics. This insightful podcast episode with Shane Gibson and Shaun McGirr delves into the evolution of data handling, from manual processes to advanced AI-driven automation. Uncover the vital role of AI in enhancing decision-making, business processes, and data democratization. Learn about the delicate balance between AI automation and human insight, the risks of over-reliance on AI, and the future of AI in data analytics. As the landscape of data analytics evolves rapidly, this episode is a must-listen for professionals seeking to adapt and thrive in an AI-driven future. Stay ahead of the curve in understanding how AI is reshaping the role of data professionals and transforming business strategies.

The challenge of parsing files from the wild

In this instalment of the AgileData DataOps series, we’re exploring how we handle the challenges of parsing files from the wild. To ensure clean and well-structured data, each file goes through several checks and processes, similar to a water treatment plant. These steps include checking for previously seen files, looking for matching schema files, queuing the file, and parsing it. If a file fails to load, we have procedures in place to retry loading or notify errors for later resolution. This rigorous data processing ensures smooth and efficient data flow.

The Magic of Customer Segmentation: Unlocking Personalised Experiences for Customers

Customer segmentation is the magical process of dividing your customers into distinct groups based on their characteristics, preferences, and needs. By understanding these segments, you can tailor your marketing strategies, optimize resource allocation, and maximize customer lifetime value. To unleash your customer segmentation magic, define your objectives, gather and analyze relevant data, identify key criteria, create distinct segments, profile each segment, tailor your strategies, and continuously evaluate and refine. Embrace the power of customer segmentation and create personalised experiences that enchant your customers and drive business success.

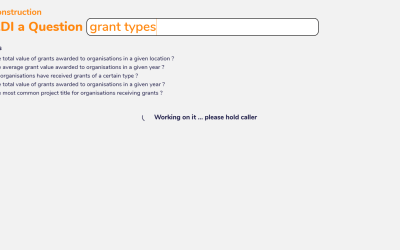

Fast Answers at Your Fingertips: Unveiling AgileData’s ‘Ask a Quick Question’ Feature

Immerse yourself in the magical world of data with AgileData’s ‘Ask a Quick Question’ capability. Perfectly designed for data analysts and business analysts who need to swiftly extract insights from data, this capability facilitates quick data queries and rapid exploratory data analysis.

The Hitchhikers guide to the Information Product Canvas

TD:LR In mid 2023 I was lucky enough to present at The Knowledge Gap on the Information Product Canvas. Watch The Information Product Canvas, is an innovative pattern designed to capture data requirements visually and repeatably, making it easier for both stakeholders...

Magical plumbing for effective change dates

We discuss how to handle change data in a hands-off filedrop process. We use the ingestion timestamp as a simple proxy for the effective date of each record, allowing us to version each day’s data. For files with multiple change records, we scan all columns to identify and rank potential effective date columns. We then pass this information to an automated rule, ensuring it gets applied as we load the data. This process enables us to efficiently handle change data, track data flow, and manage multiple changes in an automated way.

The patterns of Activity Schema with Ahmed Elsamadisi

In an insightful episode of the AgileData Podcast, Shane Gibson hosts Ahmed Elsamadisi to delve into the evolving world of data modeling, focusing on the innovative concept of the Activity Schema. Elsamadisi, with a rich background in AI and data science, shares his journey from working on self-driving cars to spearheading data initiatives at WeWork. The discussion centers on the pivotal role of data modeling in enhancing scalability and efficiency in data systems, with Elsamadisi highlighting the limitations of traditional models like star schema and data vault in addressing complex, modern data queries.

AgileData App

Explore AgileData features, updates, and tips

Network

Learn about consulting practises and good patterns for data focused consultancies

DataOps

Learn from our DataOps expertise, covering essential concepts, patterns, and tools

Data and Analytics

Unlock the power of data and analytics with expert guidance

Google Cloud

Imparting knowledge on Google Cloud's capabilities and its role in data-driven workflows

Journey

Explore real-life stories of our challenges, and lessons learned

Product Management

Enrich your product management skills with practical patterns

What Is

Describing data and analytics concepts, terms, and technologies to enable better understanding

Resources

Valuable resources to support your growth in the agile, and data and analytics domains

AgileData Podcast

Discussing combining agile, product and data patterns.

No Nonsense Agile Podcast

Discussing agile and product ways of working.

App Videos

Explore videos to better understand the AgileData App's features and capabilities.

I can write a bit of code faster

TD:LR To get data tasks done involves a lot more than just bashing out a few lines of code to get the data into a format that you can give it to your stakeholder/customer. Unless of course it really is a one off and...

The Focus Podcast – Agile Data Governance Patterns

Early in 2022 Shane Gibson was lucky enough to talk to the Focus podcast crew about agile governance in the data domain. Watch or listen to the episode.

ELT without persisted watermarks ? not a problem

We no longer need to manually track the state of a table, when it was created, when it was updated, which data pipeline last touched it …. all these data points are available by doing a simple call to the logging and bigquery api. Under the covers the google cloud platform is already tracking everything we need … every insert, update, delete, create, load, drop, alter is being captured

Three Agile Testing Methods – TDD, ATDD and BDD

In the word of agile, there are three common testing techniques that can be used to improve our testing practices and to assist with enabling automated testing.

Scaling data teams – Tammy Leahy

Shane Gibson chats to Tammy Leahy about how she helped scale the data teams she leads.

Data Products

Join Shane and guest Eric Broda as they discuss data products.

Using a manifest concept to run data pipelines

TD:LR … you don’t always need to use DAGs to orchestrate Previously we talked about how we use an ephemeral Serverless architecture based on Google Cloud Functions and Google PubSub Messaging to run our customer data...

“Serverless” Data Processing

TD:LR When we dreamed up AgileData and started white-boarding ideas around architecture, one of the patterns we were adamant that we would leverage, would be Serverless. This posts explains why we were adamant and what...

A Data Engineer an Agile Coach and a Fish walk into a bar…

This is the first of a series of articles detailing how we built a platform to make data fun and remove complexity for our users

Analysts can model democratising data modeling

In 2022 Shane Gibson was lucky enough to present “Analysts can model democratising data modeling” at the Knowledge Gap Conference

Watch the presentation.

Data Mesh Podcast – Finding useful and repeatable patterns for data

TD:LR I talk to Scott Hirleman on the Data Mesh Radio podcast on my thoughts on Data Mesh and the need for resuable patterns in the data & analytics domain. My opinion on Data Mesh I am not a fan of the current...

The Enchanting World of Data Magicians: Marketing Analytics vs. Product Analytics

Marketing Analytics involves analysing data from various channels, such as social media, email, and websites, to assess the performance of marketing efforts.

Product Analytics focuses on understanding and improving user experience and satisfaction with digital products or services.

What is Data Lineage?

TD:LR AgileData mission is to reduce the complexity of managing data. In the modern data world there are many capability categories, each with their own specialised terms, technologies and three letter acronyms. We...

Agile Security

Join Shane and guest Laura Bell as they discuss how you can eat the security elephant in an agile way.

Data Mesh 4.0.4

TD:LR Data Mesh 4.0.4 is only available for a very short time. please ensure you scroll to the bottom of the article to understand the temporal nature of the Data Mesh 4.0.4 approach.This article was published on 1st...

Abracadabra! Unravel the Mysteries of Data Catalog

Data catalogs are comprehensive inventories of an organisations data assets, helping data analysts and information consumers to quickly find, understand, and utilise relevant information. They foster collaboration, maintain data governance, and ensure compliance.

Catalog & Cocktails Podcast – agile in the data domain

Early in 2022 Shane Gibson was lucky enought to talk to the Catalog and Cocktails podcast crew about agile in the data domain. Watch or listen to the episode.

Whats the hottest new data thing in 2022 — Data Mesh or Metric Store

There is a lot of vendor washing going on A lot of data vendors are vendor washing their technologies to pretend they enable "Data Mesh" as they are punting on Data Mesh being the new thing for 2022. I think they are...

Data Observability Uncovered: A Magical Lens for Data Magicians

Data observability provides comprehensive visibility into the health, quality, and reliability of your data ecosystem. It dives deeper than traditional monitoring, examining the actual data flowing through your pipelines. With tools like data lineage tracking, data quality metrics, and anomaly detection, data observability helps data magicians quickly detect and diagnose issues, ensuring accurate, reliable data-driven decisions.

Analyst vs Analytics Engineer – Benn Stancil

Join Shane and guest Benn Stancil as they discuss the difference between the age old analyst role and the new emerging role of analytics engineer (amongst a few other interesting things)

3 agile architecture things

Join Shane and guest Brian McMillian as they discuss the art of architecture in an agile data world. We discuss 3 things: 1. the 4x approach2. data vault3. everything is code